Enterprise AI ROI in 2026 has a structural problem, and it isn't the technology. Fewer than one-third of AI decision-makers can tie the value of AI to their organization's P&L, according to Forrester's 2026 Technology and Security Predictions, and separately, only 15% reported any EBITDA lift from AI in the prior twelve months. If you're a CEO or CFO who just checked whether your company is in the other 85%, you already know the answer, and you suspect the official explanation is wrong: more time is needed, better models are coming, adoption is still scaling.

It is wrong. The problem is that your organization bought AI and forgot to buy the accountability architecture required to turn it into money.

The models are capable enough. The gap is structural: enterprises have deployed AI into organizational architectures where no one owns the financial outcome, no baseline existed before deployment, and no mechanism converts productivity gains into board-reportable results. The adoption metrics accumulate. The P&L doesn't move. And in Q2 2026, boards are done being patient about the difference.

The companies generating strong AI returns — Deloitte's 2026 research identifies them as 34% of enterprises, using AI to deeply transform their products, processes, or business models — aren't running better tools than the other 66%. They've built different governance structures. Specifically, they treat AI as a portfolio of capital investments, each with defined baselines, named P&L owners, proof standards, and kill criteria. That's not a technology decision. It's an organizational design decision.

This article names the three structural failure modes responsible for the majority of AI ROI gaps in 2026 — and gives CFOs a diagnostic they can apply before the next board meeting.

The Adoption Trap: Why AI Activity Metrics Don't Produce AI Investment Returns

The most expensive mistake in enterprise AI isn't deploying the wrong tool. It's measuring the right tool with the wrong metric.

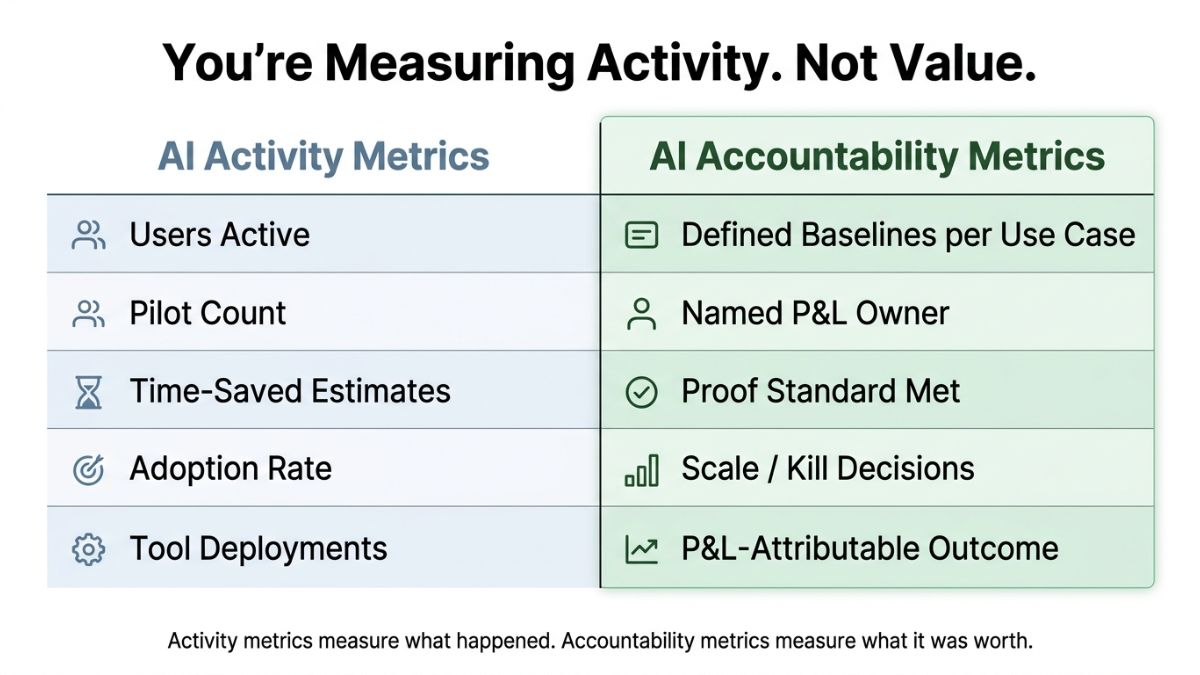

Organizations default to adoption metrics — seats licensed, usage hours logged, productivity self-reports — because these are the numbers AI vendors make easy to track. They accumulate quickly and look like progress. They are not progress. They measure activity; they don't measure value. That distinction matters enormously when a board asks what the company got for $8 million in AI spend.

Strategy practitioner Rujuta Singh, writing on LinkedIn, identified the root cause plainly: "Tools feel like progress. Problems feel like homework." The organizational pressure to demonstrate AI engagement — to IT leadership, to boards, to employees — creates powerful incentives to count the wrong things. Adoption metrics satisfy that pressure without requiring anyone to answer the harder financial question.

The harder question is whether reported productivity ever reaches the P&L. IBM's Q4 2025 Think Circle report found that 79% of executives report AI productivity improvements, while only 29% can measure AI ROI with confidence. That 50-percentage-point gap between experiencing productivity effects and connecting them to financial outcomes is not a measurement lag. It is a structural problem. The translation mechanism from operational efficiency to a P&L line doesn't exist in most enterprises, and time alone doesn't create it.

What fills the gap is what analysts are calling AI Activity Theater: the organizational phenomenon in which adoption metrics accumulate without financial accountability structures, producing the appearance of transformation while value leaks at every stage between deployment and results. One enterprise practitioner described a $10 million LLM deployment that generated zero revenue after nearly a year — every activity metric presumably fine, the P&L unmoved.

Scale amplifies dysfunction. AI deployed into misaligned organizational architecture doesn't self-correct as adoption deepens — it accelerates the rate of unmeasurable spending. The companies that deployed the most AI tools without governance infrastructure didn't get ahead of the ROI problem. They compounded it.

Three Structural Failure Modes Behind Most AI ROI Gaps

The trap has three distinct shapes. Which of them an organization is in determines which interventions work.

What causes enterprise AI to fail financially? The answer isn't technology. It's one or more of three structural failures: no pre-deployment baseline, no named P&L owner, and no mechanism to convert productivity gains into reportable financial outcomes. Most failing AI investments exhibit all three simultaneously.

Failure Mode 1: The Baseline Gap

AI was deployed without defined pre-deployment performance baselines. Without a baseline — how long did the claims process take before AI, how many errors occurred, what was the cost per transaction — there is no proof. Time-saved estimates become fabrications. Productivity claims become unverifiable. When the board asks for evidence, the answer is an adoption metric dressed up as an outcome.

An anonymous AI evaluator at a consulting firm described the practical reality: most "time saved" calculations are invented. Teams estimate that AI tools save X hours per week without measuring how long tasks actually took before deployment. The result, in their words, is "vibes-based decision making disguised as data-driven strategy." It doesn't survive board scrutiny because it shouldn't.

The intervention is straightforward, if retroactively uncomfortable: establish the baseline now, even mid-deployment. A retrospective baseline — imperfect but documented — is more defensible than none.
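To make the baseline requirement concrete, here is a minimal Python sketch of the comparison a documented baseline makes possible. The `ProcessSnapshot` structure, the claims-process labels, and every figure are hypothetical illustrations, not data from any organization discussed in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessSnapshot:
    """Performance of one business process over a measurement window."""
    label: str
    transactions: int
    total_cost: float  # fully loaded cost of running the process, in dollars
    errors: int

    @property
    def cost_per_transaction(self) -> float:
        return self.total_cost / self.transactions

    @property
    def error_rate(self) -> float:
        return self.errors / self.transactions


def measured_change(baseline: ProcessSnapshot, current: ProcessSnapshot) -> dict:
    """Compare a post-deployment snapshot against the documented baseline."""
    return {
        "cost_per_transaction_delta": current.cost_per_transaction - baseline.cost_per_transaction,
        "error_rate_delta": current.error_rate - baseline.error_rate,
    }


# Hypothetical figures for illustration only.
before = ProcessSnapshot("claims, pre-deployment", transactions=10_000, total_cost=500_000.0, errors=400)
after = ProcessSnapshot("claims, post-deployment", transactions=10_000, total_cost=420_000.0, errors=250)

change = measured_change(before, after)
print(f"Cost per transaction changed by {change['cost_per_transaction_delta']:+.2f} dollars")
print(f"Error rate changed by {change['error_rate_delta']:+.2%}")
```

Without the `before` snapshot there is nothing to subtract from, which is the Baseline Gap in one line. A retrospective baseline simply fills `before` with the best documented estimate available and records that it is an estimate.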

Failure Mode 2: The Ownership Void

AI use cases were deployed with no named P&L owner and no defined kill criteria. This is the most common failure mode in enterprise AI reviews, and the least acknowledged.

The ownership void frequently surfaces as shadow AI: employees using tools outside sanctioned channels because official deployment is too slow or too restricted. One practitioner described the dynamic: "You can't forbid it, but you also can't allow it." The trap isn't the shadow usage itself — it's that shadow usage produces outcomes no one owns. There is no named person accountable for connecting AI activity to a financial result, and therefore no mechanism for either capturing value or stopping waste.

The diagnostic question: if a use case fails to meet its proof standard, who makes the kill decision? If that question doesn't have a name attached to it, the organization is in Failure Mode 2.

Failure Mode 3: The Translation Break

Productivity gains exist. The financial outcome doesn't materialize. This is not coincidence — it's a governance gap. The mechanism that converts operational efficiency to a P&L line is absent.

Consider the non-technical process user who saves five hours per week using AI — a real and documented outcome. That outcome is invisible to the board unless someone with P&L responsibility owns the translation: those hours represent $X in labor reallocation, producing $Y in capacity that was redirected to $Z in revenue-generating activity. Without that chain — and the accountability structure that enforces it — five hours saved is a productivity statistic with no financial address.

This is the IBM gap made operational: 79% of executives reporting productivity improvements, 29% able to measure ROI with confidence. The executives in the 50-point gap between those figures aren't measuring poorly. They're not measuring at all, because no governance architecture requires the translation.
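The hours-to-dollars chain described above can be sketched as a short calculation. This is an illustrative Python sketch under stated assumptions: only the five hours per week comes from the example in the text, while the headcount, loaded hourly cost, working weeks, redirected share, and revenue per redirected hour are hypothetical inputs that a P&L owner would have to supply and defend.

```python
def translate_hours_to_dollars(
    hours_saved_per_week: float,
    headcount: int,
    loaded_hourly_cost: float,
    weeks_per_year: int,
    share_redirected: float,
    revenue_per_redirected_hour: float,
) -> dict:
    """Walk the chain: hours saved -> labor value ($X) -> redirected capacity ($Y) -> revenue ($Z)."""
    annual_hours = hours_saved_per_week * headcount * weeks_per_year
    labor_value = annual_hours * loaded_hourly_cost  # $X: value of the time freed up
    redirected_hours = annual_hours * share_redirected
    redirected_capacity = redirected_hours * loaded_hourly_cost  # $Y: capacity actually reassigned
    attributed_revenue = redirected_hours * revenue_per_redirected_hour  # $Z: revenue tied to that capacity
    return {
        "labor_value": labor_value,
        "redirected_capacity": redirected_capacity,
        "attributed_revenue": attributed_revenue,
    }


# Five hours per week is from the example above; all other inputs are hypothetical.
chain = translate_hours_to_dollars(
    hours_saved_per_week=5,
    headcount=40,
    loaded_hourly_cost=60.0,
    weeks_per_year=48,
    share_redirected=0.5,
    revenue_per_redirected_hour=90.0,
)
for step, dollars in chain.items():
    print(f"{step}: ${dollars:,.0f}")
```

The arithmetic is trivial. The hard part is that `share_redirected` and `revenue_per_redirected_hour` are not observable from usage logs, so someone with P&L responsibility has to own those two numbers.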

The three failure modes compound. A case like the $10 million LLM deployment with zero revenue typically exhibits all three: no pre-deployment baseline, no named P&L owner, no conversion mechanism from token usage to business outcome. Most failed AI investments aren't single-mode failures.

The diagnostic question: if your CFO had to present AI ROI to the board next Thursday, could they name a specific dollar outcome — not time saved, not users active, not pilots completed — that would survive scrutiny?

What the 34% Do Differently: Enterprise AI Value Through Governance

Deloitte's finding that only 34% of organizations are genuinely reimagining the business with AI — creating new products, reinventing core processes, or fundamentally changing business models — is cited in virtually every analysis of enterprise AI performance. What almost no analysis examines is the interior of that 34%: the structural specifics of what they do differently.

The minority generating stronger returns doesn't have better tools. They have a different organizational logic for governing AI investment, operating across three specific dimensions.

First, they treat AI as a portfolio of capital investments, not a series of experiments. Each use case has a defined proof criterion — a specific financial outcome that must be achieved within a defined timeframe to justify continued investment. This is not AI enthusiasm constrained by governance. It is governance functioning as the value-creation mechanism, determining which bets scale and which get killed. Use cases that can't demonstrate proof don't consume the next quarter's budget.

Second, the 34% establish accountability before deployment, not after. A named business owner — not an IT lead, not an AI team — carries P&L responsibility for each use case. That ownership structure is what makes the Translation Break impossible to ignore. When a VP of Operations owns the outcome of an AI deployment in claims processing, the question "did this produce financial results?" has someone who must answer it. When ownership defaults to a technology team, the question becomes rhetorical.

Third, they design for proof, not adoption. McKinsey's 2025 State of AI research found that high performers are nearly three times as likely to have fundamentally redesigned workflows as part of their AI efforts. That redesign isn't incidental to success — it's the mechanism by which AI activity becomes an attributable financial outcome. Workflow redesign without accountability architecture still produces the IBM gap: better processes that can't be connected to P&L outcomes. The redesign is necessary; the governance is what makes it sufficient.

AI governance practitioner David Roldán Martínez, writing on LinkedIn, framed the underlying logic directly: "If AI isn't governed like capital allocation, it won't behave like one." The 34% govern AI like capital allocation — portfolio framing, proof criteria, ownership structure, and kill discipline. The 66% govern AI like software rollouts, which produces software rollout metrics and no financial outcomes.

BCG's 2026 research associates CEO involvement with stronger AI outcomes, finding that deeply engaged C-level executives are significantly more likely to rank among top AI performers. That involvement matters only when it produces structural accountability. A CEO who reviews AI dashboards monthly but hasn't assigned P&L ownership to use cases has added involvement without adding accountability. The structural conditions, not executive attention alone, generate the returns.

A practical first move for organizations in the 66%: identify the three AI use cases representing the highest current spend, and answer three diagnostic questions — baseline, owner, proof standard — for each. The gaps that surface in that exercise define the intervention priority, not the technology roadmap.
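One way to run that exercise is as a simple register of use cases, checked against the three diagnostic questions. The sketch below is a minimal Python illustration. The `UseCase` fields, the use-case names, the spend figures, and the owner title are all hypothetical, and a spreadsheet would serve equally well.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UseCase:
    name: str
    annual_spend: float
    baseline: Optional[str] = None        # documented pre-deployment measure
    pnl_owner: Optional[str] = None       # named business-side executive
    proof_standard: Optional[str] = None  # financial outcome and timeframe


def diagnostic_gaps(use_case: UseCase) -> list[str]:
    """Return the diagnostic questions that have no documented answer."""
    checks = {
        "baseline": use_case.baseline,
        "owner": use_case.pnl_owner,
        "proof standard": use_case.proof_standard,
    }
    return [question for question, answer in checks.items() if not answer]


def top_spend_gaps(portfolio: list[UseCase], top_n: int = 3) -> dict[str, list[str]]:
    """Apply the diagnostic to the top_n use cases by current spend."""
    ranked = sorted(portfolio, key=lambda uc: uc.annual_spend, reverse=True)[:top_n]
    return {uc.name: diagnostic_gaps(uc) for uc in ranked}


# Hypothetical portfolio for illustration only.
portfolio = [
    UseCase("internal chatbot", 500_000),
    UseCase("claims triage assistant", 3_000_000,
            baseline="cost per claim, prior four quarters", pnl_owner="VP Operations"),
    UseCase("sales email drafting", 2_000_000),
    UseCase("contract review", 1_500_000,
            baseline="review hours per contract", pnl_owner="General Counsel",
            proof_standard="20% lower outside-counsel spend within 12 months"),
]

gaps = top_spend_gaps(portfolio)
for name, missing in gaps.items():
    print(name, "->", ", ".join(missing) or "no gaps")
```

The output is an ordered list of what is missing for the largest spends, which is the intervention priority the paragraph above describes.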

The Board Defense Model: Making AI Transformation Strategy Auditable

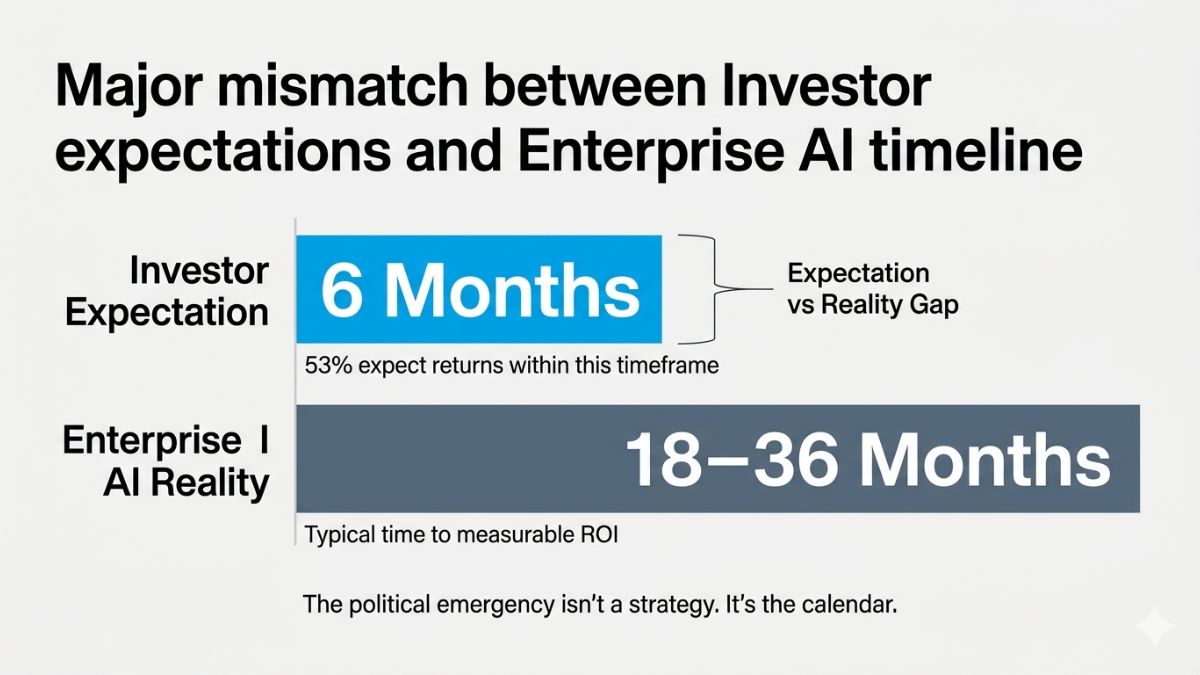

The urgency here is not theoretical. Teneo's Vision 2026 CEO and Investor Outlook Survey found that 53% of investors expect positive AI returns within six months or less — while 84% of large-cap CEOs (those with $10 billion or more in revenue) say new AI initiatives will take longer than six months to deliver. That gap isn't a strategy misalignment. It's a political emergency playing out in Q2 2026.

Forrester projects that 25% of planned 2026 AI spend will be deferred to 2027. That deferral won't come from boards concluding that AI doesn't work. It will come from boards concluding that specific executives cannot demonstrate what they got for the money already spent. The Forrester number is a forecast of accountability consequences, not a forecast of technology outcomes.

The CFO's board defense argument has three components, and all three must be present for it to hold.

- Defined baselines: Which use cases have defined baselines against which outcomes can be measured? Not every use case — the specific ones representing material investment. The answer cannot be "we're tracking usage." It must name a business process, a pre-deployment performance measure, and a post-deployment comparison.

- Named P&L ownership: Who owns the P&L outcome for each material use case? Not the AI team, not IT. A business-side executive with existing P&L accountability. The name, the use case, and the proof standard must be documented.

- Proof and kill standards: What is the proof standard for scaling versus killing a use case? What financial outcome, achieved within what timeframe, justifies continued investment? What failure condition triggers the kill decision — and who makes it?

The difference between a board conversation that holds and one that doesn't is the difference between "we have 5,000 AI users and productivity is up" and "use case X reduced claims processing cost by $2.3 million against a $400,000 investment, and use case Y was killed in month four when it failed to meet the proof standard established at deployment."

The second answer doesn't require perfect outcomes. It requires accountability architecture.
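The arithmetic behind that second answer is short enough to sketch. The Python below is a minimal illustration. The $2.3 million and $400,000 figures for use case X come from the example above, while the proof target, deadline, and outcome for use case Y are hypothetical stand-ins, since the example only says Y was killed in month four.

```python
def simple_roi(financial_outcome: float, investment: float) -> float:
    """Return net gain divided by investment (1.0 means a 100% return)."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (financial_outcome - investment) / investment


def scale_or_kill(outcome_to_date: float, proof_target: float,
                  months_elapsed: int, deadline_months: int) -> str:
    """Apply a proof standard that was fixed at deployment time."""
    if outcome_to_date >= proof_target:
        return "scale"
    if months_elapsed >= deadline_months:
        return "kill"
    return "continue"


# Use case X: figures taken from the board example above.
roi_x = simple_roi(financial_outcome=2_300_000, investment=400_000)
print(f"Use case X ROI: {roi_x:.0%}")

# Use case Y: hypothetical proof standard and outcome, for illustration only.
decision_y = scale_or_kill(outcome_to_date=50_000, proof_target=500_000,
                           months_elapsed=4, deadline_months=4)
print(f"Use case Y decision: {decision_y}")
```

The kill rule only works if `proof_target` and `deadline_months` are written down before deployment. Set afterwards, they become a justification rather than a standard.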

Conclusion: AI ROI in 2026 Is an Organizational Design Problem

The technology is sufficient. The governance infrastructure (baselines, ownership, translation mechanisms, proof criteria) is not.

The three failure modes described here — the Baseline Gap, the Ownership Void, and the Translation Break — don't arrive sequentially. Most organizations exhibit all three simultaneously, which is why more tools don't fix the problem and more time doesn't either. Scale applied to misaligned architecture produces more misaligned spend, not eventual returns.

The question boards are asking in Q2 2026 is no longer "are we investing in AI?" It's "can you tell us what we got for it?" The accountability architecture (baselines, named owners, proof standards) is not a governance improvement. It is the argument. Without it, the only available answer is adoption metrics, which no longer satisfy.

The companies that will own AI as a durable competitive capability aren't the ones who deployed the most tools. They're the ones who built the accountability architecture to know which tools are working — and had the discipline to kill the ones that weren't.

Frequently Asked Questions

What does AI ROI actually mean for enterprise CFOs in 2026?

AI ROI refers to measurable financial outcomes attributable to AI investment — not usage rates or productivity self-reports, but P&L-line results. For CFOs in 2026, it means the ability to name a specific dollar outcome per use case, tied to a pre-deployment baseline and owned by a named business executive.

Why do most enterprise AI pilots fail to produce measurable returns?

MIT's 2025 NANDA study found that 95% of enterprise AI initiatives show no measurable business return — though this figure specifically concerns custom enterprise AI tools failing to reach production, and the methodology has been contested by some researchers. The primary cause is structural, not technological: organizations deploy AI without pre-deployment baselines, without named P&L owners per use case, and without a governance mechanism to convert productivity gains into financial outcomes.

How should a CFO measure AI impact before the next board meeting?

Start with three diagnostic questions for each material use case: Does a pre-deployment baseline exist? Who owns the P&L outcome by name? What is the proof standard for scaling versus killing the use case? If any question lacks a documented answer, that gap is the priority — not additional deployment.

What is the difference between AI adoption metrics and AI ROI?

Adoption metrics measure activity — seats licensed, usage hours, self-reported productivity gains. AI ROI measures financial outcomes — cost reduction, revenue attribution, labor reallocation with documented P&L impact. IBM's Q4 2025 Think Circle report found 79% of executives report productivity improvements but only 29% can measure ROI with confidence. The gap is structural.

What do high-performing enterprises do differently to generate AI investment returns?

Deloitte's 2026 research identifies 34% of enterprises as genuinely reimagining the business with AI. They share three governance practices: treating AI as a capital investment portfolio with defined proof criteria per use case; assigning P&L ownership to business-side executives before deployment; and designing workflows for attributable outcomes rather than adoption volume.